Summer 2014

Bitworld

Digital archeologist Jim Boulton explores the creative history of computer technology

Digital history begins with the birth of the computer. From Charles Babbage’s Difference Engine to the code-breaking machines of Bletchley Park, early computers were used to solve mathematical problems. In the wake of the Second World War, research units such as Bell Labs began exploring the creative potential of these machines. By the 1970s, computers had become part of everyday life – digital culture had arrived. However, the first flickers of this digital revolution appeared during the previous decade or so as a direct result of the ‘Space Race’.

On 4 October 1957, the Soviet Union surprised the world by launching the first man-made satellite into space, Sputnik 1. Pride hurt, the US formed the Advanced Research Projects Agency (ARPA). Over the next decade, billions of dollars were poured into scientific research, eventually putting a man on the moon in the ultimate display of one-upmanship. However, space travel wasn’t the only beneficiary of this funding bonanza: a huge investment was also made in the emerging field of computer science.

ARPANET, the first incarnation of the internet, is the most famous outcome of this investment, but ARPA’s legacy extends much further, pervading almost every aspect of digital culture. One of the main recipients of ARPA funding was the Massachusetts Institute of Technology (MIT). In autumn 1961, a PDP-1 computer was installed in the Electrical Engineering Department. The first thing the students did was create a game. They called it Spacewar! – the first computer game.

PDP-1 (1960), the machine on which the first computer game, Spacewar!, was made.

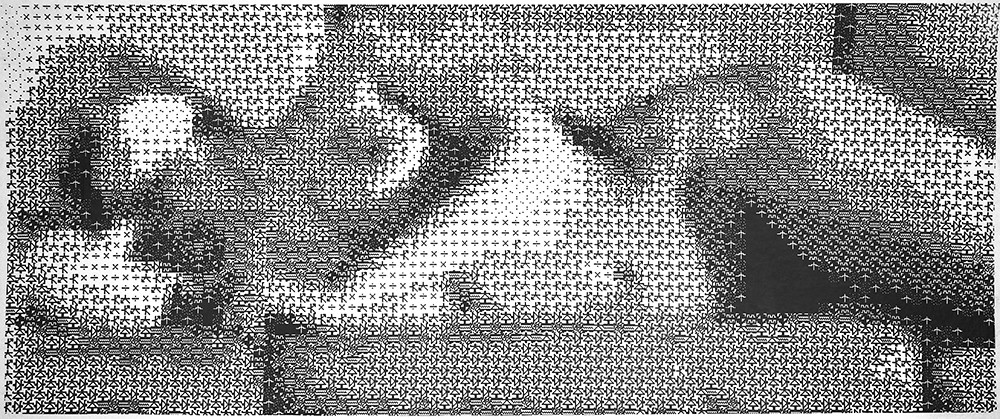

Leon Harmon and Kenneth Knowlton’s reclining nude, 1966. An image of the dancer Deborah Hay was dissected into a grid and each cell assigned an icon according to its halftone density.

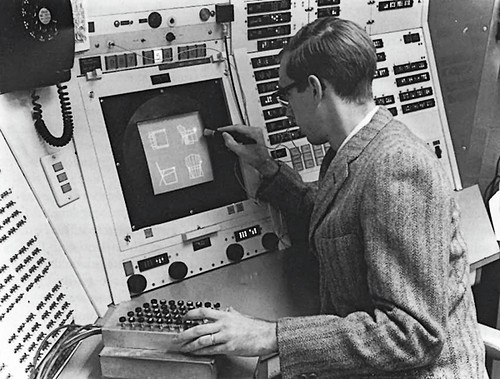

The next breakthrough at MIT was the Graphical User Interface (GUI). In 1963, PhD student Ivan Sutherland developed Sketchpad, the first computer-aided design tool. Incorporating the world’s first graphical interface and a host of other groundbreaking features, it was a stunning achievement. When asked, ‘How could you possibly have done the first interactive graphics program, the first non-procedural programming language and the first object-oriented software system, all in one year?’ Sutherland replied, ‘Well, I didn’t know it was hard.’

Following his PhD, Sutherland joined ARPA to run the newly established Information Processing Techniques Office. He was given $15 million and told to ‘go sponsor computer research’. A number of computer science departments emerged as a result. Charles Csuri started a computer graphics programme at Ohio State University, where he produced Hummingbird, an early computer animation consisting of 14,000 frames printed to microfilm and photographed. David Evans founded the Computer Science Department at the University of Utah, without which we would not have the Utah teapot, a seminal piece of 3D modelling.

Another beneficiary was the Augmentation Research Center (ARC) at the Stanford Research Institute. Led by Douglas Engelbart, the ARC team built on Sutherland’s work, adding hypertext and the mouse. Their oN-Line System (NLS) was publicly unveiled at the 1968 Fall Joint Computer Conference in what became known as ‘the Mother of All Demos’, a demonstration that would change the face of computing forever. For an industry used to punch cards and command lines, a mouse-driven virtual desktop incorporating windows, menus, icons, links and folders was a gigantic leap.

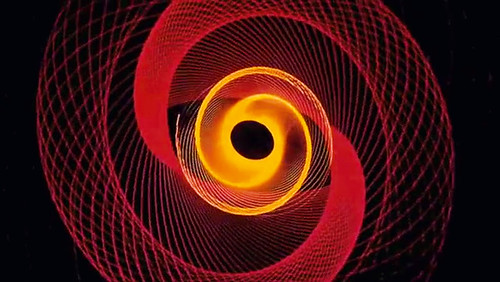

A frame from Saul Bass’s opening titles for Hitchcock’s Vertigo, 1958. John Whitney Sr’s slit-scan animations were created by a home-made analogue computer, anticipating digital algorithms.

Westerns, Pong and nudes

In parallel to the computer scientists, a wave of artists began experimenting with computer-generated graphics. Chief among them was John Whitney Sr. From his experience working with anti-aircraft guns, Whitney realised their control mechanism could be used like a giant spirograph. The resulting slit-scan animations were spectacular, most famously appearing in his 1958 collaboration with Saul Bass on the title sequence of Hitchcock’s Vertigo. Whitney went on to pioneer computer-generated animation using a similar algorithm-driven technique.

Bell Labs also benefited from a generous grant from ARPA. Between 1961 and 1967, programmers and artists including Leon Harmon, Ken Knowlton, A. Michael Noll and Lillian Schwartz worked together to create some of the first computer-generated artworks. Highlights include A. Michael Noll’s Computer Composition with Lines (1964), Ken Knowlton and Leon Harmon’s Studies in Perception I (1966), the first computer-generated nude, and Lillian Schwartz’s Pixillation animation (1970). The work of such pioneers was celebrated in 1968 by ‘Cybernetic Serendipity’ at London’s ICA, a landmark exhibition which demonstrated beyond doubt the creative potential of computers.

This potential had also been recognised by Ralph Baer, who came up with the idea of playing games on a TV set. By 1968, he had built a prototype he called the Brown Box. He licensed this ball-and-paddle system to Magnavox and it made its commercial debut as the Odyssey in 1972, launching with a simple table tennis game. Nintendo secured the right to distribute the Odyssey in Japan and Atari were quick to build a coin-operated version, which they called Pong. Two giants in digital culture had taken their first steps. Baer had taken video games out of the computer lab and into the home, inventing a new entertainment medium in the process.

The work of pioneering computer artists and video game designers caught the attention of author-turned-film-director Michael Crichton. In 1973, he approached John Whitney Sr for help with his first Hollywood film, Westworld. Whitney turned the job down but his son, John Whitney Jr, took on the challenge. Working with the technology company Information International, Inc. – known as Triple-I – he created Yul Brynner’s pixellated robot vision: the first computer-generated imagery (CGI) to appear in a film.

The first use of CGI in a Hollywood movie: frames from Westworld, 1973, written and directed by Michael Crichton. The robot sharpshooter played by Yul Brynner sees his quarry as a pixellated outline. Effects by John Whitney Jr.

The new Sketchpad is a Mac

John Whitney Sr also had an indirect hand in the next CGI milestone. Larry Cuba, his collaborator on the seven-minute-long 1975 masterpiece Arabesque, went on to create the Death Star briefing sequence in Star Wars, the first extended use of CGI in a film. George Lucas immediately saw the potential, setting up a computer division within Lucasfilm to create the special effects for The Empire Strikes Back. Within this division sat the Graphics Group, which eventually evolved into Pixar, taking CGI to infinity and beyond.

Meanwhile, several of Engelbart’s team had gone on to develop the groundbreaking Xerox Alto. But it would not be Xerox that steered the course of modern computing. On a visit to Xerox PARC (Palo Alto Research Center), Steve Jobs saw the GUI at first hand. In 1983, Apple launched the Lisa, one of the first personal computers with a GUI and mouse. The next year came the Macintosh. The image used to promote the machine was a digital version of Hashiguchi Goyo’s woodblock print Woman Combing Her Hair. It had been made in MacPaint, Bill Atkinson’s pioneering graphics package, which owed much to Sketchpad. It said all that needed saying about Apple’s new computer: this was more than a calculating machine – it was a creative tool.

Thirty years on, with multi-player gaming, the Web and the smartphone, the digital world has been integrated into every aspect of our lives. Engineers invented the medium, but artists and designers shaped it. Thanks to them, digital culture has become simply culture.

Jim Boulton is an advisor to the exhibition ‘Digital Revolution’, Barbican Gallery, London, from 3 July to 14 September 2014. His book 100 Ideas that Changed the Web is published this August.

A computer operator using Sketchpad in 1963, the first program to use a graphical user interface.

Jim Boulton, digital archeologist, London

First published in Eye no. 88 vol. 22 2014
