Summer 1998

Drag me. Click me.

A sprinkling of icons may make for a pretty interface, but many of them are opaque and meaningless

In front of me is a “window” with seven icons and one button ranged across the top. On the left, there are three squares with a drop shadow. The first is empty, the second has a fuzzy scribble that looks like the initials ZH transcribed into an early version of MacPaint, and in the third the words BIN and HEX are neatly arranged one above the other like the sign for a fashionable eatery. I know what BinHex means, though, so of the three I can at least grasp its intention. The other icons, which do not appear in squares – but instead are evenly spaced across the top of the window (in distinction to the first three?) – are, if it is possible, even more opaque. One has a tick with the initials QP. QP? Nothing comes to mind. Its neighbour depicts a miniature Mac Plus – a machine I remember with some fondness – juxtaposed on top of the familiar “document” icon with the folded corner. I wonder if this is what Koestler meant by “bisociation,” but this does not help its meaning to become any clearer. The next has another tick, this time against a document with a curving arrow that seems to suggest it should be turned back to front. Still no clue.

The last has two documents, one above the other. Their pages are as delightfully empty as my mind. “Go on then,” I think, “switch on balloon help.” I am looking at the composition window of Eudora Light – a popular mail program – in the version for Apple Macintosh.

Sometimes design seems to be more about some sort of Freudian externalising of a dark, mysterious interior than it is about rational problem-solving. Composing and sending email is – like other computing tasks – a straightforward, logical operation. Why then did the designer of this particular program, like so many others, choose to represent its functions by such a bewildering array of images when a few well-chosen words (like those I am forced to summon in balloons) could make them immediately clear? I can only account for this by reference to a deep, perverse streak of human atavism.

Icons are a ubiquitous feature of the computer landscape, one of the four pillars (together with windows, pointers and the mouse) that underpin the whole notion of the graphical user interface (GUI). The conventional – and largely unquestioned – wisdom makes icons synonymous with warm adjectives such as “intuitive” and “user friendly.” But are they what they are claimed to be? Or are they the flying ducks of the information age – gratuitous ornamentation that intrudes into both the usability and the aesthetics of the computing environment? Few applications, from operating systems to websites, seem complete without several shelves of this virtual bric-a-brac, like decorative china in an olde world tea-shop. Yet we talk as if this unstoppable urge towards the twee were a breakthrough in the ergonomics of the human-computer interface. What a funny species we are . . .

An article of faith among interface designers holds that there are “verbal” and “visual” people – the latter something of an historically oppressed minority who, through the graphic potential of new media, are at last being given the opportunity to engage in the “information revolution.” This view, held with extraordinary tenacity in the interface and information design communities, is behind much of the vigorous promotion of icons for interactive applications.

Yet here am I (presumably not alone), a graphic designer by trade and temperament, finding myself increasingly “visually challenged” by the iconic language of the programs I use. How come?

Like most unexamined assumptions, the basis for the idea that people respond to computer applications in such profoundly different ways turns out to be a concatenation of anecdotal evidence, popular misunderstanding and wishful thinking. I have long wondered where the hard science to substantiate this arbitrary division of humanity into visual and verbal might be, since nothing I have come across in the cognitive sciences comes anywhere close. There are echoes of Howard Gardner’s 1983 “Frames of Mind” – but not in any form he would recognise – as there are of the right brain/left brain dichotomy popularised in Robert Ornstein’s 1973 Psychology of Consciousness. But even Ornstein is concerned that these ideas have been taken out of context and totally exaggerated. In his latest book [1], he is at pains to point out that instead of people bifurcating into “left brainers” (verbal types) or “right brainers” (visual types), many of those categorised as “right brainers” simply have undeveloped or atrophied left hemisphere skills – pointing to the failure of our education system to achieve adequate levels of literacy, rather than any enlightened encouragement of visual thinking. “The right-hemisphere specialisations develop to their fullest when informed by a fully developed left side. Otherwise we get form without content.”

Even if we credit the visual/verbal idea as a hypothesis – which strikes me as over-generous – it raises some difficult questions. Since most computer use is concerned with manipulating alphanumeric information (word processing, spreadsheet calculation, database interrogation and email), does this not suggest that most of the users will tend to come from the “verbal” camp? So should not images be the alternative, rather than the default means of calling functions? And why are icons the answer for those who see themselves as visually inclined – surely the different organisation of their dominant mode of thinking needs to be catered for by something better than a skin-deep sign? In the dizzy world of new media, however, such questions appear deeply heterodox.

Difficulties with icons

There are a number of objections to an iconic approach: functional, semantic, aesthetic and philosophical. Before exploring these, however, it is worth considering what icons are. Despite the name, with its resonant overtones of the sacred and profound, icons are surprisingly prosaic. By and large – although this is not an exhaustive taxonomy – icons fall into three categories. There are those that represent the encapsulation and organisation of data: documents, folders, disks etc. These could be called “nominal” icons – icons that present difficult-to-conceptualise or largely intangible things in terms of familiar items. Then there are icons that represent tools, often by turning the cursor into a parody of a physical tool such as a scalpel or a pencil. For the sake of classification, we can call this small but significant group “functional icons.” Finally, there are icons that represent destinations – although this is something of a conceit since nobody actually goes anywhere. This last category, thanks to the explosive growth of the Web, is now the largest and most diverse. The kinds of visual treatment are correspondingly simple. Icons are either symbolic, associating an abstract function with a familiar, physical equivalent, or they are heraldic, carrying the impress of proprietorship. Signposts and trash cans are examples of the former, while application icons (and their accompanying document icons) are examples of the latter. Interestingly, the principle that “a picture is worth a thousand words” does not apply here – icons invariably represent only one thing; any element of ambiguity involves an accompanying loss of clarity.

Functional problems with icons

In a Wired interview [2] with Jimmy Guterman, information design guru Edward Tufte describes computers as “just about the lowest resolution information interface known to humankind, compared to the map, the photograph, printed text or the brain. You have to do special things in a low-resolution world: not waste pixels, use anti-aliased typography, give all the space to the user because it is so precious.” Tufte goes on to say that “icons are pretty inefficient” – explaining how, in one instance [the opening screen of Norton Desktop for Windows] the repetition of a single icon wastes 4,000 pixels on the screen to present “. . . one piece of information, one single noun . . . .”

Although the screen is not entirely kind to text, words are often more efficient than images in terms of the amount of space they take up. Many icons are also accompanied by a caption – this is invariably the case with what I have called nominal icons, the icons that inhabit our desktops and file management windows. In these cases, the image is almost completely redundant. Its only residual functions are to differentiate it in kind from other similar entities (a function some computing environments achieve more efficiently, but slightly less elegantly, with file extensions) and to give it a sense of “thingness.”

Presenting the same information twice, visually and verbally, smacks of a profligate redundancy in an environment still constrained by coarse resolution and limited image area. It can only really be justified by the visual person/verbal person theory – where one is supposedly translating the same information for two different kinds of minds. However, the constraints mean that screen designers are constantly having to make compromises and sacrifices. Many find the wasteful but decorative icon hard to let go of, and it is more common in interface design to find rows of icons without captions than unadorned hypertext links – a clear signal of where the designer’s sympathies lie.

Semantic problems with icons

Edward Tenner, in his celebrated critique of the downside of technology, Why Things Bite Back [3], describes how iconic representation has gone from being what he considers a radical and brilliant way of enhancing usability to a virtual Babel:

“The re-complicating effect is that while some commands and programs are much clearer as symbols than as words, others are resolutely and sometimes inexplicably non-graphic. (The world has at least two serviceable Stop designs, but still no decent Push and Pull symbols for doors) . . . It probably doesn’t matter too much that Apple Computer has copyrighted the Macintosh icon of the garbage can as a symbol to which unwanted files can be dragged for deletion. Windows software uses a wastebasket instead. But what does a shredder mean, then? Does it discard files, and/or does it do what paper shredders are supposed to: make the original text impossible to recognise or reassemble? And some symbols mean different things in programs written for the same operating system. A magnifying glass can call for enlarging type as it does in some Apple software, but it can also begin searching for something or looking up a file in Macintosh as well as in Windows applications. A turning arrow can mean Rotate Image, but a similar arrow can denote Undo; Microsoft tried a hundred icons for Undo and finally gave up.”

Donald Norman [4], on the other hand, cannot see what all the fuss is about: “Icons. Lots of people worry about the design of icons. I can’t understand them. What does that symbol mean? What does this symbol mean? Bad design they say. No, no, I don’t think it’s bad design. There’s no reason why a visual icon should be understandable when you first see it. The real trick is to make it so that when somebody explains it to you, you say ‘ah, I got it,’ and you never have to have it explained again. So a good design means you explain it only once.” Norman’s point of view is perfectly reasonable in the context of an operating system or an application, given that there is “somebody” to explain it to you – and that you use it regularly. But even icons that you have had explained can become obscure if you do not use those features every day, as my Eudora experience shows.

When the same insouciance is applied to the design of a website – which for the majority of “visitors” is a one-off, unmediated experience – the sense of frustration and disempowerment can be an order of magnitude greater.

One of the most commonly heard arguments in favour of icons is that they allow for a truly cross-cultural visual language. Just as pictograms have become part of the way we communicate without words, so icons help the user navigate the computer interface regardless of her native language. Or so the theory goes. Pictograms have become a useful way of communicating certain kinds of information – although it is salutary to realise quite how many learnt associations we have to make to be able to understand them. Some kinds of messages do lend themselves to literal presentation: the car falling off the road into the water will be understood by most drivers as a vivid demonstration of a potential hazard. But others, such as the red warning circle, are entirely arbitrary conventions. Much of the richness of language comes from its vocabulary of abstractions – things that by definition do not lend themselves to depiction. With pictograms we are forced to represent abstract concepts in ways that lose all the advantages of immediate recognition, and that require prospective users to learn new graphic languages – in addition to their spoken and written languages. Nor are these languages particularly “intuitive” – ask any driving instructor how many candidates fail their driving test because they misread road signs.

Another argument that is advanced in favour of icons is our greater facility for memorising images. This was, of course, the gist of the classical art of memory – and the basis for the scintillating stage performances of “memory men.” The use of images as mnemonics is indeed very clever, but it requires considerable effort – to say nothing of skill and patience – on the part of the practitioner. And it is hard to see how this connects with the ostensible purpose of icons, since one of the avowed intentions of the user interface is to give us immediate feedback and not to require us to remember anything.

Issues of meaning and ambiguity are at the heart of the problem with icons. Our instincts naturally draw us towards the figurative potential of the image. But there is little scope for encouraging multiple readings in presenting what is, in effect, a control whose narrow meaning has been fixed not in design but in the rigid logic of programming. And it is this definite denotation that makes me question the arguments about the “visual” user. If there really are such people whose dominant mode of perception is the direct, imaginal mentation that the rest of us use only occasionally, do they really benefit from the picture as single noun? Or would they insist, like William Blake (verbal or visual person?) that meaning be “Twofold Always! May God us keep, from single vision and Newton’s sleep”?

Aesthetic problems with icons

Someone once told me – on what authority I am not sure – that one of the reasons our computers require us to do so many “physical” things on screen (pushing buttons, twiddling knobs, dragging sliders) is that North America never developed a tradition of building automata. Had the personal computer come from the Old World, the hardware would have allowed us to do these things manually. I cannot vouch for the historical accuracy of this observation, but it does explain what is for me one of the most excruciating aesthetic deficiencies of the graphical user interface – the way it relies on simulations of the tactile controls of other technologies. Since icons are most often buttons, this reliance manifests itself in awkward attempts at trompe l’oeil: bevelled edges, drop shadows, “depress modes,” click sounds and the like.

The aesthetic problems do not end with mechanical emulation. There is also the issue of consistency. Interface designers devote a great deal of time to producing environments that have a visual coherence – a distinct graphic personality. But their efforts are undermined by the way that these environments are nested within other environments – each with its own conventions and approach. Inside the desktop, with its own distinctive iconography, may be a browser with an entirely idiosyncratic visual treatment. And inside this browser is a website, with a third type of graphic approach. Like a Russian doll, the website may turn out to be a frame-set, containing yet further sites and styles. What each designer intended as a congruous aesthetic experience for the user has become a riot of jarring colours, images and treatments. Rather than resolving these tensions within itself, however, the aesthetics of interface design is now bursting out into other media. Prompted by what I’m tempted to call “icon envy,” there are now televisual treatments that scream “click me!” – even though there’s nothing to click with. And in a bizarre reversal of the claim that screen design is being held back by the conventions of older media, we also increasingly find this tendency in print – where rows of icons taunt the reader with the promise of an interactivity they cannot actually deliver.

Philosophical problems with icons

Conceptually, icons fall into a kind of no-man’s land between the resolutely modernist positivism of Neurath’s Isotype and the postmodern “reader-centred” semiotics that derive from de Saussure and Peirce. Both schools lay claim to them, no doubt attracted by their enthusiastic take-up in the new media. But in reality they are neither fish nor fowl: not “positive,” observation-based characters; not multiply referential signs.

In Neurath’s scheme, pictorial representation can bypass the ambiguities and imprecision of language, appealing to an entirely empirical, logical but wordless capacity of the mind through the mediation of the eye. Needless to say, it is difficult to recognise our “visual” person as a Vienna Circle Positivist, as Neurath would have liked to see him or her. This perspective has been highly influential in informing the discipline of information design, which still asserts a defiantly Positivist view of communication based on concepts of “clear” communication, “plain” language and diagrammatic representation. And information design is one of the most potent forces shaping the new media, a comfortable bedfellow with the enthusiastic scientism that drives the “technological revolution.”

From the Saussurian perspective, signs have an arbitrary (or unmotivated) connection to the things they signify. Icons, thus, are tokens or pointers, which take up their role within an intricately interwoven system of “differences,” and whose connotations depend upon cultural and other readings. This is a beguiling theory that has been eagerly taken up by the more expressive tendency in graphic design.

I have a hard time, though, imagining the genesis of language as a Positivist – or even a Saussurian – naming exercise. [For example, one caveperson to another: “Here is another thing. Let us call it ‘tree.’”] It is far more likely that language evolved first in what Martin Heidegger described as its “disclosive” aspect – that is, as our remote ancestors became ever more self-conscious, their awareness of the world became populated with linguistic concepts and, vice versa, as they developed language, they became more aware of the “thingness” of their environment. In The Way to Language [5], Heidegger points out that the disclosive aspect of language is both prior to, and considerably more important than, its representational aspect.

Added to this, there is the metaphorical character of language. The nineteenth-century view (epitomised by such figures as Müller, Humboldt and de Saussure) was that language was originally descriptive and only later (as in the Homeric period) figurative. But, as Owen Barfield [6] points out, all the evidence is to the contrary – the earliest records of language, and the most “primitive” languages, are profoundly metaphorical. It is only recently that words have begun to be used in a purely descriptive, representational sense (with the irony that their etymology often preserves their original metaphorical status).

When it comes to the way we relate to our computers, the idea that our world is disclosed to us through the medium of language – and that language is itself inherently metaphorical – becomes highly significant. We understand computing through the mediation of persuasive metaphors that supplant any conception of what we are actually doing with a profoundly anthropomorphic construction, where logical entities composed of bits become embodied in the images of things familiar to us from the world of atoms. Under the influence of this metaphor, we can convince ourselves that we are “dragging a document” into the “trash,” even though the operations that are actually happening in our computer’s memory or CPU bear little resemblance to these mundane analogies. Similarly, we are induced to believe we are “surfing” through “cyberspace” while we sit dispassionately, twitching our mouse and staring hypnotically into a two-dimensional screen. It is an intimation of the hyper-real world Jean Baudrillard describes, where signs are no longer used to point to, or even conceal, reality, but to conceal the absence of anything we could recognise as reality.

In the beginning was the word

These reflections lead me to wonder whatever happened to “hypertext” – the idea that words could “do” as well as “say,” while remaining located contextually within a narrative. Replacing images with words does away with most of the problems with icons, returning the computing environment from the make-believe world of manipulating tokens to something that can be more closely correlated with what actually goes on under the hood. Since the information we access with our computers is still predominantly verbal, it seems surprising that this has not been more widely explored. The book became an extraordinary cognitive and aesthetic artefact despite its predominantly verbal content – why not the Web page?

Words seem to be disparaged in the new media – perhaps because the people who are involved in shaping them have so little sympathy for words. This is particularly ironic, since words can often have the resonances, ambiguities and figurative dimensions of which icons – in the few pixels allotted to them – are incapable. It is also sobering to reflect that while the Internet was limited to seven-bit ASCII text, it gave rise to all sorts of experimental forms – including the idea of the virtual community. Since it has been able to accommodate images, it has simply mutated into a giant marketing billboard.

Yet I do agree that a sprinkling of icons can make a computer application look pretty. Just don’t expect me to understand them.

Footnotes

1. R. Ornstein, The Right Mind: Making Sense of the Hemispheres, New York: Harcourt Brace & Company, 1997, page 95.

2. “Envisioning Interfaces” in WIRED 2.08, August 1994, pages 60-61.

3. Edward Tenner, Why Things Bite Back, London: Fourth Estate, 1997, pages 195-6.

4. Donald Norman, Defending Human Attributes in the Age of the Machine, New York: Voyager, 1994, page 1110 (CD-ROM).

5. Martin Heidegger, Basic Writings, edited by David Farrell Krell, London: Routledge, 1993, pages 394-426.

6. Owen Barfield, Saving the Appearances: A Study in Idolatry, Middletown, CT: Wesleyan University Press, 1988.

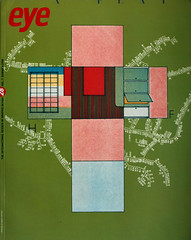

First published in Eye no. 28 vol. 7 1998
